Background

I use a lot of EC2 Spot instances, and for audit and debugging purposes I like to keep track of EC2 instance state changes. I needed to be able to quickly see when an EC2 instance was launched and terminated. This information is available in CloudTrail, but I needed a quicker way to look up instances by their ID.

Initially, I had set up an SNS topic to notify me of state changes. However, that became annoying once I started getting too many e-mail notifications, and it was not easy to filter or sort out information for the instance I was interested in.

So, I decided to use DynamoDB to store instance state changes. I used the Serverless Framework to set up a CloudWatch Event which picks up all EC2 instance state changes and passes them to a Python Lambda function, which stores the event details in DynamoDB.

I did not want to end up with hundreds of items in my DynamoDB table, so I also added a Time To Live (TTL) for the items. DynamoDB deletes items automatically after their TTL timestamp has passed, which keeps the size of my table small.

This post is intended to show how easy it is to set a DynamoDB TTL from a Python Lambda function. It can also be a reference for using the Serverless Framework, setting up a CloudWatch Event and creating a DynamoDB table with Time To Live enabled.

Requirements

- Make sure you have installed the Serverless Framework on your workstation.

- Make sure you have all the required IAM permissions to publish your function and create the DynamoDB table.

Procedure

To create the template for our work, I run the commands as shown below.

sls create --template aws-python3 --path ec2logger

cd ec2logger

Here you will see two files, serverless.yml and handler.py. I am going to update these files with our code as shown below.

service: ec2logger # NOTE: update this with your service name

provider:
  name: aws
  runtime: python3.7
  environment:
    DYNAMODB_TABLE: ${self:service}
  stage: prd
  iamRoleStatements:
    - Effect: "Allow"
      Action:
        - dynamodb:Query
        - dynamodb:Scan
        - dynamodb:GetItem
        - dynamodb:PutItem
        - dynamodb:UpdateItem
      Resource: "arn:aws:dynamodb:${opt:region, self:provider.region}:*:table/${self:provider.environment.DYNAMODB_TABLE}"

functions:
  ec2logger:
    handler: handler.ec2logger
    memorySize: 128
    timeout: 5
    events:
      - cloudwatchEvent:
          event:
            source:
              - "aws.ec2"
            detail-type:
              - "EC2 Instance State-change Notification"
          enabled: true

# you can add CloudFormation resource templates here
resources:
  Resources:
    TodosDynamoDbTable:
      Type: 'AWS::DynamoDB::Table'
      Properties:
        AttributeDefinitions:
          -
            AttributeName: instance-id
            AttributeType: S
          -
            AttributeName: time
            AttributeType: S
        KeySchema:
          -
            AttributeName: instance-id
            KeyType: HASH
          -
            AttributeName: time
            KeyType: RANGE
        ProvisionedThroughput:
          ReadCapacityUnits: 1
          WriteCapacityUnits: 1
        TimeToLiveSpecification:
          AttributeName: ttl
          Enabled: 'TRUE'
        TableName: ${self:provider.environment.DYNAMODB_TABLE}

Let us do a quick review of our serverless.yml file.

I am setting an environment variable, DYNAMODB_TABLE, to the same name as the service. This name is used to create the DynamoDB table, so the table is named ec2logger.

iamRoleStatements is where I define what the Lambda function can do with the DynamoDB table that is going to be created.

The events section is where I define the CloudWatch Event. If you are only interested in some events, you can modify this section to trigger the rule only on them. I am interested in all EC2 state changes.
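To make the idea of narrowing the rule more concrete, here is a minimal Python sketch of an event pattern restricted to two states, together with a deliberately simplified matcher. The `matches` helper and the choice of states are assumptions made for illustration; the helper only handles exact-value lists and is not how CloudWatch Events evaluates patterns internally. In serverless.yml, the equivalent pattern would sit under the event key.

```python
import json

# Pattern limited to "running" and "terminated" state changes.
# The choice of states is an assumption for illustration.
PATTERN = {
    "source": ["aws.ec2"],
    "detail-type": ["EC2 Instance State-change Notification"],
    "detail": {"state": ["running", "terminated"]},
}


def matches(pattern, event):
    """Simplified matcher: every pattern key must be present in the event,
    and the event value must be one of the listed values. Nested dicts
    are checked recursively."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        if isinstance(expected, dict):
            if not isinstance(event[key], dict) or not matches(expected, event[key]):
                return False
        elif event[key] not in expected:
            return False
    return True


if __name__ == "__main__":
    sample = {
        "source": "aws.ec2",
        "detail-type": "EC2 Instance State-change Notification",
        "detail": {"instance-id": "i-0123456789abcdef0", "state": "stopped"},
    }
    print(json.dumps(PATTERN, indent=2))
    # A "stopped" event is not in the allowed states, so this prints False.
    print(matches(PATTERN, sample))
```

With a pattern like this, the Lambda function would only be invoked for launches and terminations, which also reduces the number of items written to the table.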

In the resources section, I am setting up my DynamoDB table. My partition key is instance-id and my sort key is time, which is the instance state change time. Time To Live is enabled on the ttl attribute.
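Given this key schema, looking up the history of a single instance is a Query on the partition key. The sketch below is one possible way to do it, not part of the original setup: `build_query` and `instance_history` are hypothetical helper names, `client` is assumed to behave like `boto3.client("dynamodb")`, and pagination is ignored for brevity.

```python
def build_query(table_name, instance_id):
    """Build keyword arguments for the low-level DynamoDB Query call."""
    return {
        "TableName": table_name,
        # "instance-id" contains a hyphen, so it is referenced via a placeholder.
        "KeyConditionExpression": "#id = :id",
        "ExpressionAttributeNames": {"#id": "instance-id"},
        "ExpressionAttributeValues": {":id": {"S": instance_id}},
        # The sort key is the event time; return the newest entries first.
        "ScanIndexForward": False,
    }


def instance_history(client, instance_id, table_name="ec2logger"):
    """Return (time, state) pairs for one instance, newest first.

    `client` is expected to behave like boto3.client("dynamodb").
    Only the first page of results is read.
    """
    response = client.query(**build_query(table_name, instance_id))
    return [(item["time"]["S"], item["state"]["S"]) for item in response["Items"]]
```

A call could look like `instance_history(boto3.client("dynamodb", region_name="us-east-1"), "i-0123456789abcdef0")`, where the instance ID is a placeholder.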

Edit the handler.py file as shown below.

import json
import time

import boto3

# Change the region to match where you deploy.
dynamodb = boto3.resource('dynamodb', region_name='us-east-1')
table = dynamodb.Table('ec2logger')

# Retention period: 14 days, expressed in seconds.
days = 14*60*60*24


def ec2logger(event, context):
    # DynamoDB TTL expects the expiry time in epoch seconds.
    ttl = int(time.time()) + days

    if 'instance-id' in event['detail']:
        response = table.put_item(
            Item={
                'instance-id': event['detail']['instance-id'],
                'time': event['time'],
                'state': event['detail']['state'],
                'ttl': ttl
            }
        )

    body = {
        "message": "logged successfully!",
        "input": event
    }

    response = {
        "statusCode": 200,
        "body": json.dumps(body)
    }

    return response

I am setting Time To Live to 14 days, and I only store the instance-id, state and time from the event passed to the Lambda function.
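The TTL arithmetic can be sketched on its own. DynamoDB expects the TTL attribute to be a number holding the expiry time in epoch seconds, so the value is simply "now" plus the retention period. The helper names `ttl_epoch` and `ttl_as_utc` below are hypothetical and only serve to illustrate the calculation.

```python
import time
from datetime import datetime, timezone

SECONDS_PER_DAY = 24 * 60 * 60


def ttl_epoch(days, now=None):
    """Return the expiry time as whole epoch seconds, `days` after `now`."""
    if now is None:
        now = time.time()
    return int(now) + days * SECONDS_PER_DAY


def ttl_as_utc(epoch_seconds):
    """Render a TTL value as an ISO 8601 UTC timestamp for easier inspection."""
    return datetime.fromtimestamp(epoch_seconds, tz=timezone.utc).isoformat()


if __name__ == "__main__":
    expiry = ttl_epoch(14)
    print(expiry, ttl_as_utc(expiry))
```

Converting the stored number back to a readable timestamp is handy when checking in the DynamoDB console that items will expire when expected.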

The code above is simple and lacks error/exception handling. Please add appropriate exception handling to suit your needs. Modify the region to make sure it matches where you do your work.
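As one possible starting point for that exception handling, the sketch below separates building the item from writing it, so that malformed events and failed writes are reported instead of always returning 200. The function names `build_item` and `log_state_change` are hypothetical, and `table` is assumed to behave like a boto3 DynamoDB Table resource.

```python
import json
import logging
import time

logger = logging.getLogger(__name__)
RETENTION_SECONDS = 14 * 24 * 60 * 60


def build_item(event, now=None):
    """Extract the stored fields; raises KeyError if the event is malformed."""
    now = time.time() if now is None else now
    detail = event["detail"]
    return {
        "instance-id": detail["instance-id"],
        "time": event["time"],
        "state": detail["state"],
        "ttl": int(now) + RETENTION_SECONDS,
    }


def log_state_change(table, event):
    """Write one state change and report failures to the caller."""
    try:
        item = build_item(event)
    except (KeyError, TypeError) as exc:
        logger.warning("Skipping malformed event: %r", exc)
        return {"statusCode": 400, "body": json.dumps({"message": "malformed event"})}
    try:
        table.put_item(Item=item)
    except Exception:
        # With boto3 this would typically be botocore.exceptions.ClientError.
        logger.exception("put_item failed for %s", item["instance-id"])
        raise  # re-raise so Lambda records the invocation as failed
    return {"statusCode": 200, "body": json.dumps({"message": "logged successfully!"})}
```

In the handler, `table` could be created with `boto3.resource('dynamodb').Table(os.environ['DYNAMODB_TABLE'])`, which reuses the environment variable defined in serverless.yml rather than hard-coding the table name.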

Deploy

After editing the code, go ahead and deploy your function using the command shown below.

sls deploy

This will trigger the process of creating the CloudFormation template, which in turn will create a Lambda function, an IAM role for your function and a DynamoDB table with Time To Live enabled.

After the resource creation completes, go to CloudFormation to see the stack information and what was created, then to Lambda to see your function, to CloudWatch Event Rules to see the rule that was created, and finally to DynamoDB to see the table.

Testing

If you have a test EC2 server in your account, you can go ahead and start it. This will trigger the CloudWatch Event, which in turn will run the Lambda function to store the state change in DynamoDB.
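If you do not have a spare instance to start and stop, a hand-built event can stand in for the real one. The sketch below builds a dict shaped like an EC2 Instance State-change Notification; the function name `make_state_change_event` is hypothetical, and the account ID, region, event ID and instance ID are placeholder values.

```python
import json


def make_state_change_event(instance_id, state, when="2019-01-01T12:00:00Z"):
    """Build a dict shaped like an EC2 Instance State-change Notification.

    Account ID, region and event ID are placeholder values.
    """
    region = "us-east-1"
    account = "123456789012"
    return {
        "version": "0",
        "id": "00000000-0000-0000-0000-000000000000",
        "detail-type": "EC2 Instance State-change Notification",
        "source": "aws.ec2",
        "account": account,
        "time": when,
        "region": region,
        "resources": [f"arn:aws:ec2:{region}:{account}:instance/{instance_id}"],
        "detail": {"instance-id": instance_id, "state": state},
    }


if __name__ == "__main__":
    event = make_state_change_event("i-0123456789abcdef0", "running")
    print(json.dumps(event, indent=2))
```

The printed JSON could be saved to a file such as event.json and passed to `sls invoke local --function ec2logger --path event.json`. Note that the handler would still write to the real DynamoDB table when invoked this way.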

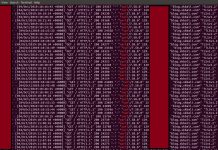

In my case, I started an EC2 instance and then after few minutes stopped it. The image below shows the state recorded in DynamoDB.

If you no longer wish to keep this function, you can run

sls remove

This will remove all the resources that were created earlier.

Instead of doing 1 RCU and 1 WCU, I’d recommend setting the table to on-demand mode. You do this by replacing the section in the yaml file where you designate provisioned capacity with “BillingMode: PAY_PER_REQUEST”.

Thanks for your feedback. This will be very helpful for those who need higher capacity!