Background

In this post I demonstrate how to use the AWS Encryption CLI to perform client-side encryption and decryption of files in a folder. AWS makes it easy to keep data encrypted at rest in S3. However, this alone may not be enough when you need to store confidential data.

In my current project, I work with sensitive data that must remain encrypted at all times, even after it is retrieved from S3. The data is downloaded from S3, decrypted, processed, and then removed from local storage immediately, so the window during which the unencrypted data is available is very short.

The AWS Encryption SDK is a client-side encryption library designed to make it easy to encrypt and decrypt data using industry standards and best practices. For more information, see the links at the end of this post.

In my demo I also show the effect of using Data Key Caching. Please consult the Data Key Caching link below to decide whether it makes sense for you. You have to evaluate the implications of key caching and its impact on security.

Data Key Caching may reduce the number of calls to the Key Management Service (KMS), but it also means that a data key is used more than once to encrypt files.

Installation

To install the AWS Encryption SDK command line interface (aws-encryption-cli), follow the instructions from this page.

Procedure

If you do not have a Customer Managed Key (CMK), let's start by creating one. Then store the ARN of the key in a variable for later use.

aws kms create-key \
--tags TagKey=Purpose,TagValue=Demo \
--description "Demo key"

export cmkArn=arn:aws:kms:us-east-1:xxxxxxxxxxx:key/7350e90d-aee2-XXXXXXXXXXXXXX
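The step above copies the ARN by hand from the create-key output. As a minimal sketch, assuming the AWS CLI is configured with permission to create keys, the ARN can be captured directly with the CLI's standard `--query` and `--output` options. The function name `create_demo_key` is a hypothetical helper, not part of any AWS tool.

```shell
# Hypothetical helper: create a KMS key and print only its ARN.
# --query and --output are global AWS CLI options; KeyMetadata.Arn is the
# ARN field in the create-key response.
create_demo_key() {
  aws kms create-key \
    --tags TagKey=Purpose,TagValue=Demo \
    --description "Demo key" \
    --query 'KeyMetadata.Arn' \
    --output text
}

# Usage (not run here, because it creates a real key in your account):
#   export cmkArn=$(create_demo_key)
#   echo "$cmkArn"
```

Wrapping the call in a function keeps the key creation from running until you explicitly ask for it.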

Let us go ahead and create some data to encrypt and decrypt. First I will create a file filled with random data and then split it into many parts in a directory, which we will later encrypt.

mkdir encrypt decrypt data

dd if=/dev/urandom of=./xyz.bin bs=1024 count=1000000

cd data

split -b 5120000 -a 8 ../xyz.bin

cd ..

I used ‘dd’ to create a file filled with random data and the ‘split’ command to split it into 200 smaller files in the data directory.

Next, we will encrypt all our files, time the command, and check how many data keys were generated.
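To see why the split produces exactly 200 files, here is a scaled-down rehearsal of the same dd and split steps, with every size divided by 1000 so it runs in a moment. The temporary directory and the variable names `workdir` and `file_count` are my own choices for illustration.

```shell
# Scaled-down rehearsal of the dd + split step (sizes divided by 1000).
workdir=$(mktemp -d)
mkdir "$workdir/data"

# 1000 blocks of 1024 bytes = 1,024,000 bytes of random data.
dd if=/dev/urandom of="$workdir/xyz.bin" bs=1024 count=1000 2>/dev/null

# 5120-byte chunks with 8-character suffixes: data/xaaaaaaaa, data/xaaaaaaab, ...
split -b 5120 -a 8 "$workdir/xyz.bin" "$workdir/data/x"

# Count the chunk files; tr strips the padding some wc builds add.
file_count=$(find "$workdir/data" -type f | wc -l | tr -d ' ')
echo "chunks: $file_count"   # prints "chunks: 200" (1,024,000 / 5120)

rm -rf "$workdir"
```

The full-size run works the same way: 1,024,000,000 bytes divided into 5,120,000-byte chunks also gives 200 files.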

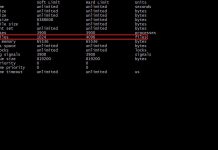

time aws-encryption-cli --encrypt --suffix \
--overwrite-metadata --metadata-output /tmp/encrypt \
--input ./data --recursive \
--master-key key=$cmkArn \
--output encrypt

real 0m26.931s

user 0m20.632s

sys 0m0.915s

Let us review this command a bit.

- The blank --suffix indicates I do not want any suffix added to the encrypted files.

- The next options, related to metadata, indicate where the CLI should write metadata about the command. We will examine this output in a bit.

- The --input option points to the directory where our 200 split files are located.

- The --master-key option uses the variable we set earlier containing the ARN of the CMK.

- The --output option is the folder where all the encrypted files are created.

Let us examine the metadata output using ‘jq’ to see how many Data Keys were used.

jq ".header.encrypted_data_keys[].encrypted_data_key" /tmp/encrypt | sort -u | wc -l

200

So, the output tells us that each file had its own unique key for encryption. Let us see what happens if we use Data Key Caching. Remove all the encrypted files and let us run the command again with key caching parameters added.

rm encrypt/*

time aws-encryption-cli --encrypt --suffix \
--overwrite-metadata --metadata-output /tmp/encrypt \
--input ./data --recursive \
--master-key key=$cmkArn \
--output encrypt \
--caching capacity=1 max_age=10 max_messages_encrypted=100

real 0m20.201s

user 0m18.976s

sys 0m0.922s

jq ".header.encrypted_data_keys[].encrypted_data_key" /tmp/encrypt | sort -u | wc -l

2

The output now shows that only two unique data keys were generated, which is consistent with 200 files and a limit of 100 messages per key. The entire operation was also faster, finishing about 6.7 seconds sooner (26.9 s versus 20.2 s).

- --caching capacity=1 means only one data key is kept in the cache.

- max_age=10 means a key can be reused for up to 10 seconds.

- max_messages_encrypted=100 means at most 100 files can be encrypted with one key.
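The relationship between these parameters and the key count can be sketched as simple arithmetic. This is only a lower bound under the assumption that max_messages_encrypted is the limit that triggers key rotation; if max_age expires first, more keys will be generated. The function name `min_data_keys` is hypothetical.

```shell
# Lower bound on data keys when each key may encrypt at most
# max_messages files: ceil(files / max_messages), using integer math.
min_data_keys() {
  files=$1
  max_messages=$2
  echo $(( (files + max_messages - 1) / max_messages ))
}

min_data_keys 200 100   # prints 2, the count reported by jq with caching on
min_data_keys 200 1     # prints 200, equivalent to one key per file
```

Raising max_messages_encrypted lowers the number of KMS calls further, at the cost of more files sharing a single data key.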

You may or may not want to use Data Key Caching. Please review its security implications. If you are working with KMS a lot, this will keep the API calls to KMS low, giving you better performance and making sure you are less likely to hit any KMS limits.

Let us now look at the decrypt command and review its performance with key caching.

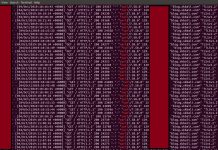

time aws-encryption-cli --decrypt --suffix \
--overwrite-metadata --metadata-output /tmp/decrypt \
--input ./encrypt --recursive \
--output decrypt

real 0m28.911s

user 0m22.953s

sys 0m0.984s

/bin/rm -rf decrypt/*

time aws-encryption-cli --decrypt --suffix \
--overwrite-metadata --metadata-output /tmp/decrypt \
--input ./encrypt --recursive \
--output decrypt \
--caching capacity=100 max_age=100

real 0m22.664s

user 0m21.458s

sys 0m0.914s

With Data Key Caching, the decryption finished about six seconds faster (28.9 s versus 22.7 s). The metadata output cannot be used to count the calls to KMS; however, you can query your AWS logs to see how many calls were made.

You can use a command similar to this to see your KMS calls.

aws logs filter-log-events --log-group-name CloudTrail/DefaultLogGroup \
--start-time $(date -d "-60 minutes" +%s000) \
--query 'events[].message' --output text | \
jq 'select(.eventSource=="kms.amazonaws.com") | select(.sourceIPAddress=="x.x.x.x") | .eventTime'

"2020-01-03T21:45:29Z"

"2020-01-03T21:45:39Z"

Replace x.x.x.x with your own IP address. Note: if you run this on an EC2 instance, filtering by IP address will probably not work, and you may have to use a different filter to query the logs.

Let us verify that the process worked correctly. We can compare the original and decrypted files using their SHA-256 checksums.
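The --start-time option of filter-log-events expects the number of milliseconds since the Unix epoch, which is why the command above appends 000 to a seconds value. The command relies on GNU date's -d option; as a sketch for systems without it, the same value can be computed with shell arithmetic. The function name `minutes_ago_ms` is hypothetical.

```shell
# Hypothetical helper: epoch time N minutes ago, in milliseconds.
minutes_ago_ms() {
  minutes=$1
  now_s=$(date +%s)                          # current time in epoch seconds
  echo $(( (now_s - minutes * 60) * 1000 ))  # shift back, convert to ms
}

start_ms=$(minutes_ago_ms 60)
echo "start-time: $start_ms"
```

The result can then be passed as `--start-time "$start_ms"` in place of the date -d expression.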

sha256sum d*/xaaaaaaaa

2bcaa9db16bb956573fa3e23b6798137997ba2048cde62149915bc2d90f57326 data/xaaaaaaaa

2bcaa9db16bb956573fa3e23b6798137997ba2048cde62149915bc2d90f57326 decrypt/xaaaaaaaa

The above shows that the files match. I only did it for one file, but you can easily write a for loop to verify all 200 files.
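As a minimal sketch of such a loop, the function below compares the SHA-256 checksum of every file in one directory with the same-named file in another. It assumes sha256sum is available and that file names are identical in both directories (which holds here because of the blank --suffix). The function name `verify_dirs` is hypothetical.

```shell
# Hypothetical helper: compare the SHA-256 of every file in the original
# directory with the same-named file in the decrypted directory.
verify_dirs() {
  orig_dir=$1
  dec_dir=$2
  failures=0
  for f in "$orig_dir"/*; do
    name=$(basename "$f")
    # sha256sum prints "<hash>  <path>"; keep only the hash.
    expected=$(sha256sum "$f" | cut -d ' ' -f 1)
    actual=$(sha256sum "$dec_dir/$name" 2>/dev/null | cut -d ' ' -f 1)
    if [ "$expected" != "$actual" ]; then
      echo "MISMATCH: $name"
      failures=$((failures + 1))
    fi
  done
  echo "$failures file(s) failed verification"
  # Return success only when every file matched.
  [ "$failures" -eq 0 ]
}

# Usage, from the directory that contains data/ and decrypt/:
#   verify_dirs data decrypt
```

A missing decrypted file yields an empty hash and is therefore reported as a mismatch rather than silently skipped.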

Let me know if you have any questions about this post by commenting below.

Further Reading

- What is AWS Encryption SDK?

- AWS Blog on Encryption SDK

- Data Key Caching

- AWS Encryption SDK Command Line Interface

- KMS CLI

- AWS KMS

- How the AWS Encryption SDK Works